#include "Python.h"

#define NPY_NO_DEPRECATED_API NPY_1_7_API_VERSION

#include "arrayobject.h"

#include <iostream>

#include <iomanip>

#include <cmath>

#include <cfloat>

#include "mkl_rci.h"

#include "mkl_types.h"

#include "mkl_service.h"

using namespace std;

struct argstruct {

double *xval;

double *yval;

};

double lorentz(double *p, double x) {

return p[2] / (1.0 + pow((x / p[0] - 1.0), 4) * pow(p[1], 2));

}

void residuals(MKL_INT *m, MKL_INT *n, double *p, double *F, void *data) {

MKL_INT i=0;

struct argstruct *bdata;

double x, y;

bdata = (struct argstruct *) data;

for (i=0; i<(*m); i++) {

x = bdata->xval[i];

y = bdata->yval[i];

F[i] = y - lorentz(p, x);

}

}

static PyObject *mkloptimizer(PyObject *self, PyObject *args) {

PyArrayObject *xval, *yval, *eps0, *p0;

argstruct adata;

double *fvec, *fjac, *p, *eps, r1, r2, res, rs=0.25;

int count=0;

MKL_INT iter1 = 100000;

MKL_INT iter2 = 100000;

MKL_INT RCI_Request, successful, info[6], iter, st_cr, n, m, i;

_TRNSP_HANDLE_t handle;

if (!PyArg_ParseTuple(args, "O!O!O!O!", &PyArray_Type, &xval, &PyArray_Type, &yval, &PyArray_Type, &eps0, &PyArray_Type, &p0)) {

return NULL;

}

m = PyArray_SHAPE(xval)[0];

n = PyArray_SHAPE(p0)[0];

adata.xval = (double *) PyArray_DATA(xval);

adata.yval = (double *) PyArray_DATA(yval);

eps = (double *) PyArray_DATA(eps0);

p = (double *) PyArray_DATA(p0);

// Initialize fvec and fjac arrays

fvec = new double [m];

fjac = new double [m*n];

for (i=0; i<(m*n); i++) {

if (i<m) {

fvec[i] = 0.0;

}

fjac[i] = 0.0;

}

PySys_WriteStdout("Initializing Intel's MKL optimizer ... ");

if (dtrnlsp_init(&handle, &n, &m, p, eps, &iter1, &iter2, &rs) != TR_SUCCESS) {

PySys_WriteStdout("FAILED\n");

MKL_Free_Buffers();

PyErr_SetString(PyExc_AssertionError, "Initialization error\n");

return NULL;

} else {

PySys_WriteStdout("SUCCESS\n");

}

PySys_WriteStdout("Checking optimizer inputs ... ");

if (dtrnlsp_check(&handle, &n, &m, fjac, fvec, eps, info) != TR_SUCCESS) {

PySys_WriteStdout("FAILED\n");

MKL_Free_Buffers();

PyErr_SetString(PyExc_AssertionError, "Check optimizer inputs.\n");

return NULL;

} else {

PySys_WriteStdout("SUCCESS\n");

}

RCI_Request = 0;

successful = 0;

PySys_WriteStdout("\nBegin nonlinear least squares algorithm\n");

PySys_WriteStdout(" Iter p[0] p[1] p[2] Residual\n");

while (successful == 0) {

if (dtrnlsp_solve(&handle, fvec, fjac, &RCI_Request) != TR_SUCCESS) {

PyErr_SetString(PyExc_AssertionError,"Error encountered while searching for solution");

MKL_Free_Buffers ();

return NULL;

}

if (RCI_Request < 0) {

PySys_WriteStdout("Algorithm terminated\n");

successful = 1;

}

if (RCI_Request == 1) {

residuals(&m, &n, p, fvec, (void *) &adata);

res = 0.0;

for (i=0; i<m; i++) {

res += pow(fvec[i],2);

}

PySys_WriteStdout("%5d % 2.4f % 2.4f % 2.4f %2.6f\n", count, p[0], p[1], p[2], sqrt(res));

count += 1;

}

if (RCI_Request == 2) {

if (djacobix(residuals, &n, &m, fjac, p, eps, (void *) &adata) != TR_SUCCESS) {

PyErr_SetString(PyExc_AssertionError,"Error encountered in calculation of the Jacobian\n");

MKL_Free_Buffers();

return NULL;

}

}

}

PySys_WriteStdout("\nRetrieving solver's results:\n");

if (dtrnlsp_get(&handle, &iter, &st_cr, &r1, &r2) != TR_SUCCESS) {

PyErr_SetString(PyExc_AssertionError,"Error encountered in retrieving results\n");

MKL_Free_Buffers ();

return NULL;

} else {

PySys_WriteStdout("Number of iterations:\t\t%4d\n", iter);

PySys_WriteStdout("Initial residual:\t\t%2.18f\n", r1);

PySys_WriteStdout("Final residual:\t\t%2.18f\n", r2);

PySys_WriteStdout("Parameter values:\t\t(%2.6f, %2.6f, %2.6f)\n",p[0],p[1],p[2]);

}

delete [] fjac;

delete [] fvec;

return Py_BuildValue("(d,d,d)", p[0],p[1],p[2]);

}

static PyMethodDef pyMKLoptmethods[] = {

{"mkloptimizer", mkloptimizer, METH_VARARGS, "Documentation:"},

{NULL, NULL, 0, NULL}

};

PyMODINIT_FUNC initpyMKLopt(void) {

Py_InitModule("pyMKLopt", pyMKLoptmethods);

import_array();

}

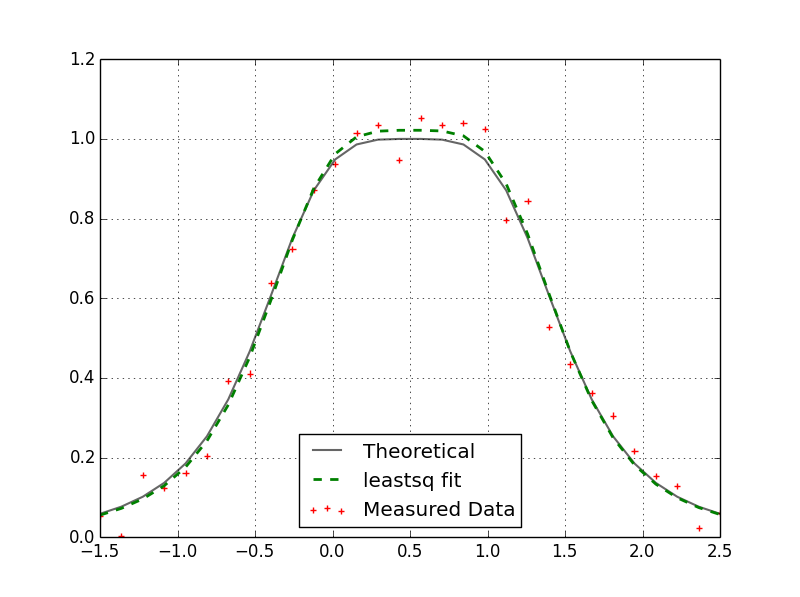

Once compiled in Visual Studio you can import the resultant module into Python as follows:

import numpy as np

import pyMKLopt

np.random.seed(22)

DBL_EPSILON = np.spacing(1.0)

def lorentz(p,x):

return p[2] / (1.0 + (x / p[0] - 1.0)**4 * p[1]**2)

p = np.array([0.5, 0.25, 1.0], dtype=np.double)

x = np.linspace(-1.5, 2.5, num=30, endpoint=True)

noisyy = lorentz(p,x) + (np.random.randn(30) * 0.05)

p0 = np.array([1.0, 1.0, 1.0], dtype=np.double) #Initial guess

eps = np.array([1e-8, 1e-20, DBL_EPSILON, 1e-20, 1e-20, DBL_EPSILON], dtype=np.double)

solution = pyMKLopt.mkloptimizer(x, noisyy, eps, p0)

print '\n\n',solution

The output of this script (displayed in the console) is:

Initializing Intel's MKL optimizer ... SUCCESS

Checking optimizer inputs ... SUCCESS

Begin nonlinear least squares algorithm

Iter p[0] p[1] p[2] Residual

0 1.0000 1.0000 1.0000 1.521464

1 0.5798 -0.1432 0.8439 1.151126

2 0.4988 -0.2203 0.9402 0.354709

3 0.5090 -0.2644 1.0182 0.248959

4 0.5071 -0.2661 1.0216 0.248171

5 0.5071 -0.2662 1.0217 0.248171

6 0.5071 -0.2662 1.0217 0.248171

7 0.5071 -0.2662 1.0217 0.248171

8 0.5071 -0.2662 1.0217 0.248171

Algorithm terminated

Retrieving solver's results:

Number of iterations: 8

Initial residual: 1.521463582532435900

Final residual: 0.248170645865868120

Parameter values: (0.507083, -0.266186, 1.021697)

(0.5070833881680623, -0.26618567803609183, 1.0216970905916452)

As you can see it yields the same results (don't worry about the negative sign on p[1] - it's value is squared). I want to compare this optimizer (which uses the trust-region algorithm) versus the levmar routine. In practice I have found the levmar routine works quite well but there are scenarios when using boundary conditions where the optimizer gets "stuck". I get around this by performing a transformation that removes the need for boundary conditions. I will test this MKL optimizer to see how it performs in those situations.